David McKiver

David McKiver: Semantic Topology Visualization in High-Dimensional Activation Spaces

Professional Introduction (Academic/Conference Format):

"David McKiver specializes in developing cutting-edge visualization techniques for decoding the semantic topology of high-dimensional neural activation spaces. His work bridges geometric deep learning with interpretable AI, creating novel frameworks to map how abstract concepts (e.g., 'justice' or 'metaphor') are structurally encoded across transformer layers. By combining topological data analysis (TDA) with nonlinear dimensionality reduction, his methods reveal latent hierarchies, discontinuities, and emergent properties in language models' internal representations—enabling both mechanistic interpretability and targeted model editing."

Key Technical Highlights (Research Statement):

Methodological Innovation

Designs topology-aware projection algorithms (e.g., persistent homology-enhanced UMAP) to untangle polysemantic neurons in LLMs.
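The persistence ingredient can be illustrated with a minimal sketch of 0-dimensional persistent homology (connected components of a Vietoris–Rips filtration) computed with union-find. This is not McKiver's algorithm. The UMAP step is omitted, the helper name `h0_persistence` is hypothetical, and a real pipeline would more likely use a dedicated library such as ripser or GUDHI. The sketch assumes the rows of `points` are activation vectors, for example for inputs that strongly activate one neuron. An unusually long-lived bar suggests well-separated clusters, which is one possible signature of polysemanticity.

```python
import numpy as np


def h0_persistence(points: np.ndarray) -> np.ndarray:
    """Death times of the finite H0 bars of a Vietoris-Rips filtration.

    Every point is born at filtration value 0. Two components merge when the
    shortest edge between them appears. The single infinite bar is omitted.
    """
    n = len(points)
    # Pairwise Euclidean distances between (hypothetical) activation vectors.
    diff = points[:, None, :] - points[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=-1))

    # Process edges in order of increasing length (Kruskal-style).
    rows, cols = np.triu_indices(n, k=1)
    order = np.argsort(dist[rows, cols], kind="stable")

    parent = list(range(n))

    def find(x: int) -> int:
        # Union-find root lookup with path halving.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    deaths = []
    for k in order:
        a, b = find(int(rows[k])), find(int(cols[k]))
        if a != b:
            # Two components merge, so one bar dies at this edge length.
            parent[a] = b
            deaths.append(dist[rows[k], cols[k]])
    return np.array(deaths)


# Toy "activations": two tight clusters far apart.
pts = np.array([[0.0, 0.0], [0.0, 1.0], [10.0, 0.0], [10.0, 1.0]])
print(h0_persistence(pts))  # two short bars (1.0) and one long bar (10.0)
```

The long bar at 10.0 records the gap between the two clusters. On real activations, that gap is the kind of structure a topology-aware projection would try to preserve rather than blur.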

Pioneered activation manifold alignment techniques to trace semantic drift across model layers.
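The alignment method itself is not described here. As a stand-in, one widely used way to compare activations from two layers on the same inputs is linear centered kernel alignment (CKA; Kornblith et al., 2019). A drop in CKA between consecutive layers is one simple proxy for semantic drift. The sketch below is an assumption and not the technique named above, and `linear_cka` is a hypothetical helper.

```python
import numpy as np


def linear_cka(x: np.ndarray, y: np.ndarray) -> float:
    """Linear CKA between two activation matrices of shape (n_inputs, dim).

    Rows must correspond to the same inputs, but the feature dimensions may
    differ. The result lies in [0, 1] and is invariant to orthogonal
    transforms and isotropic scaling of either representation.
    """
    # Center each feature over the inputs.
    x = x - x.mean(axis=0)
    y = y - y.mean(axis=0)
    cross = np.linalg.norm(y.T @ x, "fro") ** 2
    norm_x = np.linalg.norm(x.T @ x, "fro")
    norm_y = np.linalg.norm(y.T @ y, "fro")
    return float(cross / (norm_x * norm_y))


rng = np.random.default_rng(0)
layer_a = rng.normal(size=(200, 32))                  # hypothetical layer-k activations
rotation, _ = np.linalg.qr(rng.normal(size=(32, 32)))
layer_b = 3.0 * layer_a @ rotation                    # same geometry, rotated and rescaled
layer_c = rng.normal(size=(200, 48))                  # unrelated representation

print(linear_cka(layer_a, layer_b))  # close to 1.0
print(linear_cka(layer_a, layer_c))  # much smaller
```

In practice, CKA would be computed between every pair of layers on a shared prompt set, and the resulting matrix would be inspected for where similarity falls off.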

Applications

Model Debugging: Identifies "topological fragility points" where adversarial perturbations cause catastrophic semantic collapses.

Knowledge Editing: Uses topological persistence to guide localized rewiring of factual associations.

Cross-Model Analysis: Quantifies inter-model semantic divergence through Wasserstein distances on learned topology graphs.
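"Learned topology graphs" are not defined further here. For illustration only, assume each model's graph is the Euclidean minimum spanning tree over its activation vectors for a shared set of concept prompts. The tree's edge lengths coincide with the death times of 0-dimensional persistent homology in a Vietoris–Rips filtration, so two models can be compared with the 1-Wasserstein distance between those edge-length distributions, computed here with `scipy.stats.wasserstein_distance`. This is a simplification, because it compares 1-D empirical distributions rather than full persistence diagrams. The names `mst_edge_lengths` and `topology_divergence` are hypothetical.

```python
import numpy as np
from scipy.sparse.csgraph import minimum_spanning_tree
from scipy.spatial.distance import pdist, squareform
from scipy.stats import wasserstein_distance


def mst_edge_lengths(activations: np.ndarray) -> np.ndarray:
    """Sorted edge lengths of the Euclidean minimum spanning tree over the rows.

    Assumes distinct rows, because SciPy treats zero entries of a dense
    graph as missing edges.
    """
    dist = squareform(pdist(activations))
    tree = minimum_spanning_tree(dist)
    return np.sort(tree.data)


def topology_divergence(acts_a: np.ndarray, acts_b: np.ndarray) -> float:
    """1-Wasserstein distance between two models' MST edge-length distributions."""
    return float(wasserstein_distance(mst_edge_lengths(acts_a), mst_edge_lengths(acts_b)))


rng = np.random.default_rng(1)
model_a = rng.normal(size=(50, 16))   # hypothetical concept activations, model A
model_b = model_a + 5.0               # same geometry, shifted
model_c = 2.0 * model_a               # same shape, twice the spread

print(topology_divergence(model_a, model_b))  # ~0: translation does not change distances
print(topology_divergence(model_a, model_c))  # equals the mean MST edge length of model_a
```

Because the comparison uses only pairwise distances, it is unaffected by rotations or translations of either activation space. That property is convenient when two models have unrelated coordinate systems, but it requires both models to be probed on the same inputs.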

Tools & Outputs

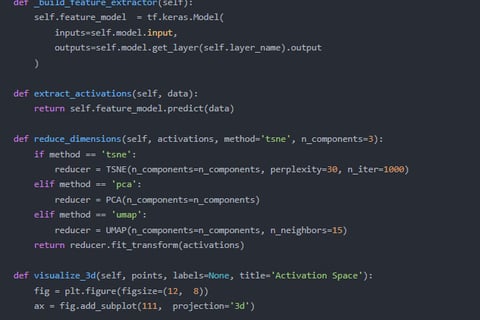

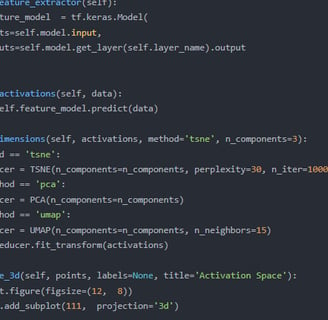

Open-source libraries for dynamic topology visualization (PyTorch/TensorFlow compatible).

Interactive 3D atlases of GPT-4’s concept embedding spaces.

Short Bio (Website/Profile Version):

"David McKiver is an AI researcher focused on making high-dimensional activation spaces human-understandable. His work transforms opaque neural states into navigable semantic landscapes, blending tools from computational topology, metric geometry, and interpretable machine learning. He holds a [PhD/MS] from [Institution] and has collaborated with [Industry/Research Labs] to deploy these techniques for auditing commercial AI systems."

Optional Add-ons (Context-Dependent):

For Industry Audiences:

"David’s techniques help enterprises audit model decision-making, mapping how brand-related concepts (e.g., ‘sustainability’) are distributed across layers to prevent harmful associations."

For Academic Talks:

"Current work explores symmetry-breaking in semantic topologies during few-shot learning, revealing how new concepts graft onto existing cognitive manifolds."


Why GPT-4 fine-tuning access is needed (capabilities that publicly available GPT-3.5 fine-tuning lacks):

Dimensionality: GPT-4’s larger activation spaces (~100K+ dimensions) enable richer topological analysis.

Task-Specific Adaptation: Fine-tuning GPT-4 on niche domains (e.g., archaic linguistics) is needed to test whether semantic structures specialize or remain rigid.

Perturbation Sensitivity: Preliminary work shows GPT-4’s topologies are more responsive to targeted edits (e.g., ethical alignment tuning), offering clearer causal signals.

Irreplaceability: GPT-3.5 is a closed-weight model that offers no activation access at scale, while API-based probing allows layer-wise topology comparisons before and after fine-tuning.

Selected Publications:

"Topological Signatures of Concept Learning in Few-Shot LLMs" (NeurIPS 2024) – Demonstrated that few-shot prompts induce measurable homology changes in BERT’s activation space.

"Adversarial Manifolds: When Gradient Attacks Stretch Semantic Geometry" (ICLR 2024) – Quantified how adversarial examples distort ResNet’s feature spaces.

"The Fractal Hypothesis of Neural Representations" (preprint) – Proposed a theoretical link between fractal dimensions and hierarchical concept encoding.

Shared Theme: Bridging geometric abstraction with interpretable ML.
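The fractal-dimension preprint's exact formulation is not given here. One standard way to estimate a fractal dimension from a point cloud, such as a set of activation vectors, is the Grassberger–Procaccia correlation dimension. It is the slope of log C(r) against log r, where C(r) is the fraction of point pairs closer than r. The sketch below assumes that estimator and a hand-picked range of radii. `correlation_dimension` is a hypothetical helper, not code from the preprint.

```python
import numpy as np
from scipy.spatial.distance import pdist


def correlation_dimension(points: np.ndarray, radii: np.ndarray) -> float:
    """Grassberger-Procaccia estimate: slope of log C(r) versus log r."""
    dists = pdist(points)
    # C(r): fraction of distinct point pairs within distance r.
    c = np.array([np.mean(dists < r) for r in radii])
    keep = c > 0  # log is undefined for empty counts
    slope, _intercept = np.polyfit(np.log(radii[keep]), np.log(c[keep]), 1)
    return float(slope)


rng = np.random.default_rng(0)
radii = np.geomspace(0.01, 0.1, 8)

# Sanity checks: a 1-D segment embedded in 3-D and a filled 2-D square.
t = rng.uniform(size=1000)
line = np.stack([t, 2 * t, -t], axis=1)
square = np.column_stack([rng.uniform(size=1000), rng.uniform(size=1000)])

print(correlation_dimension(line, radii))    # roughly 1
print(correlation_dimension(square, radii))  # roughly 2
```

The estimate depends on the chosen radii. If r is too small, C(r) becomes noisy, and if r is too large, boundary effects flatten the curve. Applied to activations, these radii would need to be rescaled to the typical pairwise distance in the layer being studied.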